All works

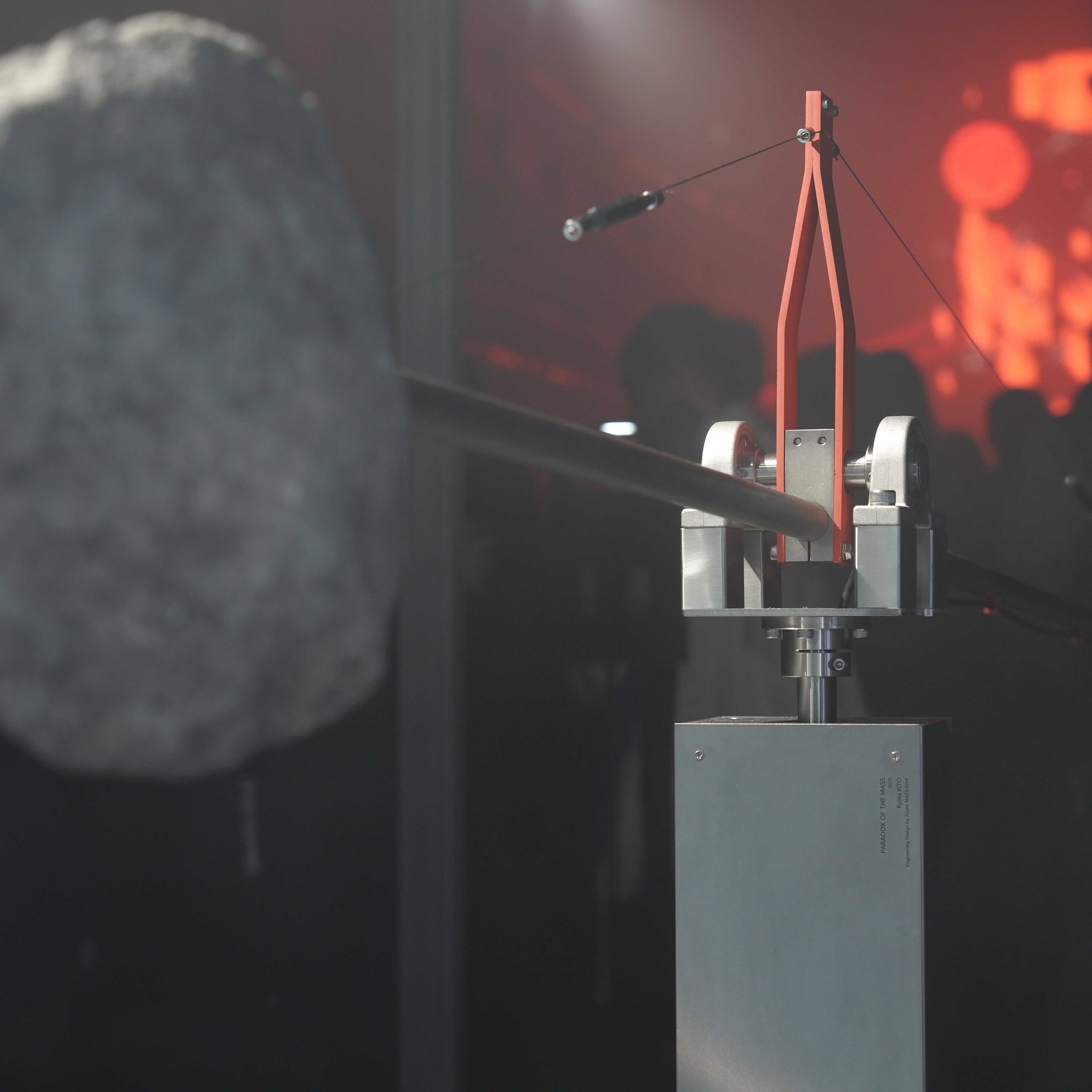

Paradox of the Mass

This work consists of a stone, a motor-driven propeller, and an intricately crafted pivot that supports them. Through precise mechanical design and careful adjustment of the center of gravity, it achieves gentle movement of the stone with minimal force. By essentially deconstructing the inherent attribute of mass that matter possesses, it offers a novel visual experience derived from the familiar presence of stone.

exhibition:

- Mugen Room by Air Max DN8, "Paradox of the Mass," Ryota Kito + Azumi Maekawa, Sankei-Shimbun Koto Center, Tokyo, Japan, 2025

Redirected Remapping

This study investigates how both the body part used to control a VR avatar and the avatar's appearance affect redirection detection thresholds. We conducted experiments comparing hand and foot manipulation of two types of avatars: a hand-shaped avatar and an abstract spherical avatar. Our results show that, irrespective of the body part used, the redirection detection threshold increased by 21% when using the hand avatar compared to the abstract avatar. Additionally, when the avatar's position was redirected toward the body midline, the detection threshold increased by 49% compared to redirection away from the midline. No significant differences in detection thresholds were observed between the hand and foot manipulations. These findings suggest that avatar appearance and redirection direction significantly influence user perception in VR environments, offering valuable insights for the design of full-body VR interactions and human augmentation systems.

publication:

- Ryutaro Watanabe*, Azumi Maekawa*, Michiteru Kitazaki, Yasuaki Monnai, Masahiko Inami. "Redirection Detection Thresholds for Avatar Manipulation with Different Body Parts." IEEE Transactions on Visualization and Computer Graphics (2025). (* These authors contributed equally.)

Stone Contours in Light

A work that visualizes and amplifies the natural undulations and irregularities of a stone through reflected light. In contrast to homogenized and standardized artificial objects, the stone's inherently amorphous form influences the reflection of light, creating a unique rhythm and landscape within the space.

STAMPER

STAMPER enables drummers to generate innovative performances by controlling multiple bass drums. STAMPER consists of several “Actuated Pedals (APs),” bass drum pedals embedded with an EC motor, paired with bass drums, and a machine vision system. The APs are used both as input and output. One AP in input mode senses the position of the pedal being used by the drummer. Other APs in actuated mode respond to the input mode AP’s motion. Machine vision is used to monitor the drummer’s movements to control the behavior of the actuated APs. In this paper, we describe the current prototype of STAMPER and what kind of drum performances can be achieved with the system by reporting a study conducted with an experienced drummer.

publication:

- Daisuke Uriu, Shuta Iiyama, Taketsugu Okada, Takumi Handa, Azumi Maekawa, Masahiko Inami. "STAMPER: Human-machine Integrated Drumming." In Extended Abstracts of the 2023 CHI Conference on Human Factors in Computing Systems (CHI EA '23), Article 259, pp. 1-5.

Machine-Mediated Teaming

Technological advancement has opened up opportunities for new sports and physical activities. We introduce a concept called machine-mediated teaming, in which a human and a surrogate machine form a team to participate in physical sports games. To understand the experience of machine-mediated teaming and to derive guidelines for designing systems that realize the concept, we built a case study system based on tug-of-war. Our system is a sports game played two against two. Each team consists of one player who physically pulls the rope and another player who participates in the physical game by controlling the machine’s actuators. We conducted user studies with this system to investigate the sport experience in this form and to reveal insights that inform future research on machine-mediated teaming. Based on the data obtained from the user studies, we identified three perspectives (machine stamina, action space, and explicit feedback) that should be considered when designing future machine-mediated teaming systems. The research presented in this paper offers a first step towards exploring how humans and machines can coexist in highly dynamic physical interactions.

publication:

- Azumi Maekawa, Hiroto Saito, Daisuke Uriu, Shunichi Kasahara, Masahiko Inami. "Machine-Mediated Teaming: Mixture of Human and Machine in Physical Gaming Experience." In Proceedings of the 2022 CHI Conference on Human Factors in Computing Systems, ACM, 2022.

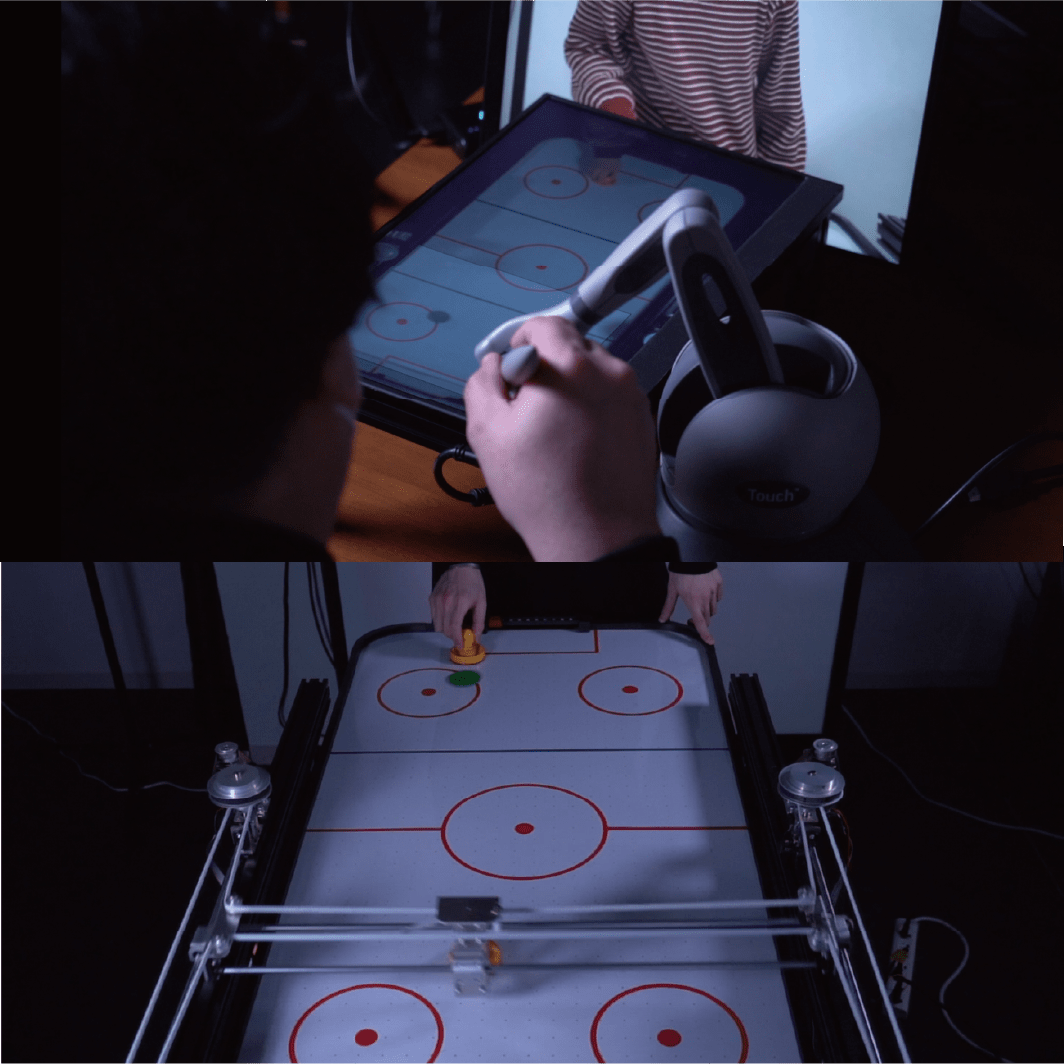

Behind the Game

When playing inter-personal sports games remotely, the time lag between user actions and feedback decreases the user’s performance and sense of agency. While computational assistance can improve performance, naive intervention that ignores the context also compromises the user’s sense of agency. We propose a context-aware assistance method that recovers both user performance and sense of agency, and we demonstrate the method using air hockey (a two-dimensional physical game) as a testbed. Our system includes a 2D plotter-like machine that controls the striker on half of the table surface, and a web application interface that enables manipulation of the striker from a remote location. Using our system, a remote player can play against a physical opponent from anywhere through a web browser. We designed the context-based striker control assistance by computationally predicting the puck’s trajectory from real-time captured video. With this assistance, the remote player exhibits improved performance without compromising their sense of agency, and both players can experience the excitement of the game.
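As a rough illustration of the trajectory prediction mentioned above, the following sketch estimates where a puck will cross a given line on the table, including bounces off the side walls. It is not the authors' implementation: the function name `predict_intercept`, the coordinate convention, and the assumptions of constant velocity and perfectly elastic wall bounces are all hypothetical simplifications.

```python
def predict_intercept(pos, vel, line_x, table_width):
    """Predict when and where a puck crosses the line x = line_x.

    Assumptions (hypothetical, for illustration only): the puck moves at
    constant velocity, the side walls sit at y = 0 and y = table_width,
    and wall bounces are perfectly elastic.
    Returns (time, y) or None if the puck never reaches the line.
    """
    x, y = pos
    vx, vy = vel
    if vx == 0:
        return None  # no motion along x, so the line is never reached
    t = (line_x - x) / vx
    if t < 0:
        return None  # the puck is moving away from the line
    # "Unfold" the bounces: travel in a straight line, then fold the
    # result back into [0, table_width] by mirroring at the walls.
    unfolded = y + vy * t
    period = 2 * table_width
    folded = unfolded % period
    if folded > table_width:
        folded = period - folded
    return t, folded
```

A striker controller could call such a function on every video frame and move toward the predicted `y` ahead of time, which is one way to offset network latency.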

publication:

- Azumi Maekawa, Hiroto Saito, Narin Okazaki, Shunichi Kasahara, and Masahiko Inami. "Behind The Game: Implicit Spatio-Temporal Intervention in Inter-personal Remote Physical Interactions on Playing Air Hockey." ACM SIGGRAPH 2021 Emerging Technologies, ACM, 2021.

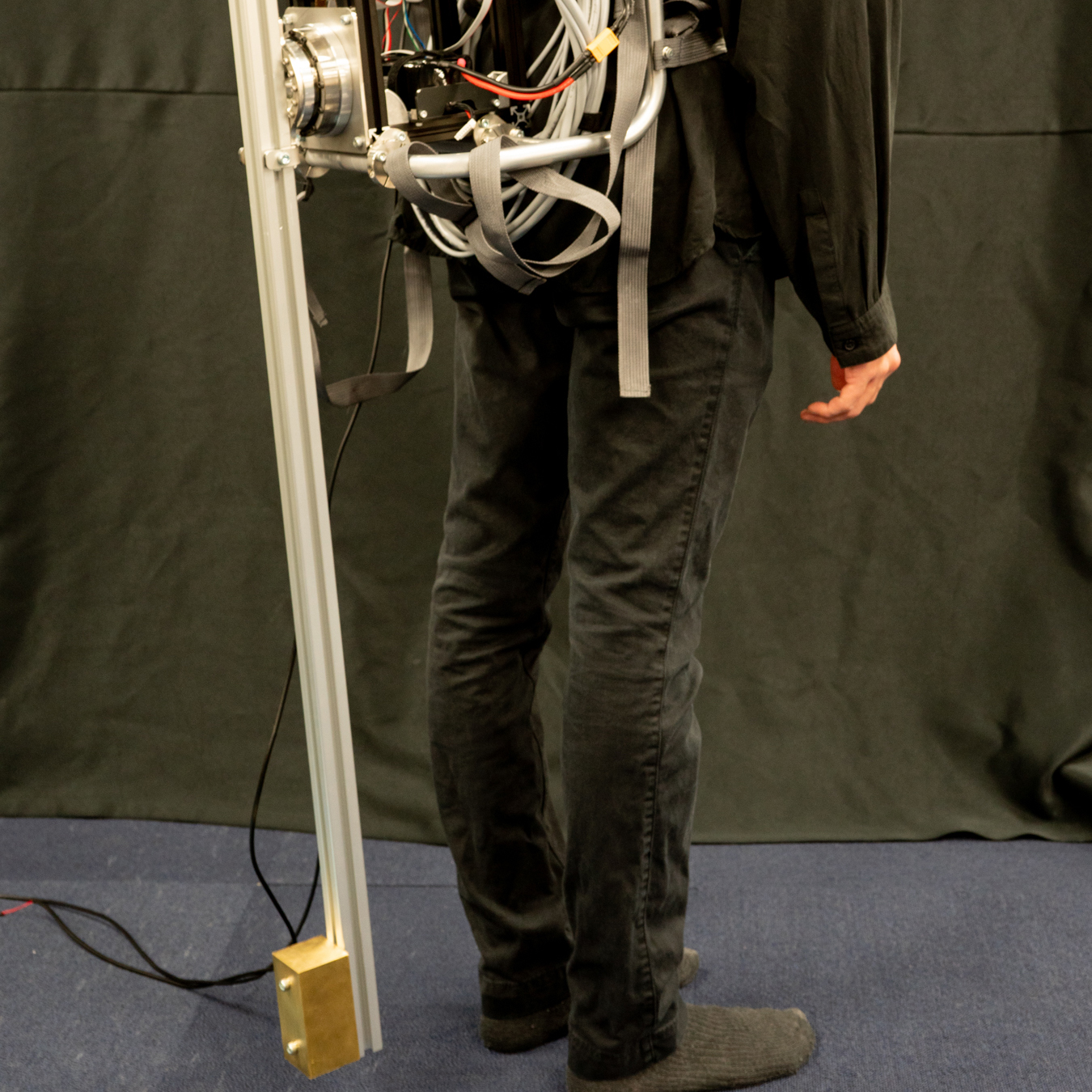

Tail-like Balancer

Reduced balance ability can lead to falls and critical injuries. To prevent falls, humans use reaction forces and torques generated by swinging their arms. Animals use a similar strategy with their tails. Inspired by these strategies, we propose an approach that uses a robotic appendage to support human balance without relying on contact with the environment. As a proof of concept, we developed a wearable robotic appendage that has one actuated degree of freedom and rotates around the sagittal axis of the wearer. To validate the feasibility of the approach, we conducted an evaluation experiment with human subjects. Actively controlling the robotic appendage improved the subjects' balance ability and enabled them to withstand impulse disturbances up to 22.8% larger on average than in the fixed-appendage condition.

publication:

- Azumi Maekawa, Kei Kawamura, and Masahiko Inami. "Dynamic Assistance for Human Balancing with Inertia of a Wearable Robotic Appendage." In 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS).

Hand with Sensing Sphere

We propose a novel body-centered interaction system that uses a spherical camera attached to the hand. Its broad and unique field of view enables an all-in-one approach to sensing multiple pieces of contextual information in hand-based spatial interactions: (i) hand location on the body surface, (ii) hand posture, (iii) hand keypoints in certain postures, and (iv) the near-hand environment. The system uses a deep-learning approach to recognize hand location and posture. After sufficient data collection and training, it achieves high recognition accuracy: 85.0% for hand location and 88.9% for hand posture. Our results and example demonstrations show the potential of 360° cameras for vision-based sensing in context-aware body-centered spatial interactions.

publication:

- Riku Arakawa, Azumi Maekawa, Zendai Kashino, Masahiko Inami. "Hand with Sensing Sphere: Body-Centered Spatial Interactions with a Hand-Worn Spherical Camera." In Symposium on Spatial User Interaction, pp. 1-10.

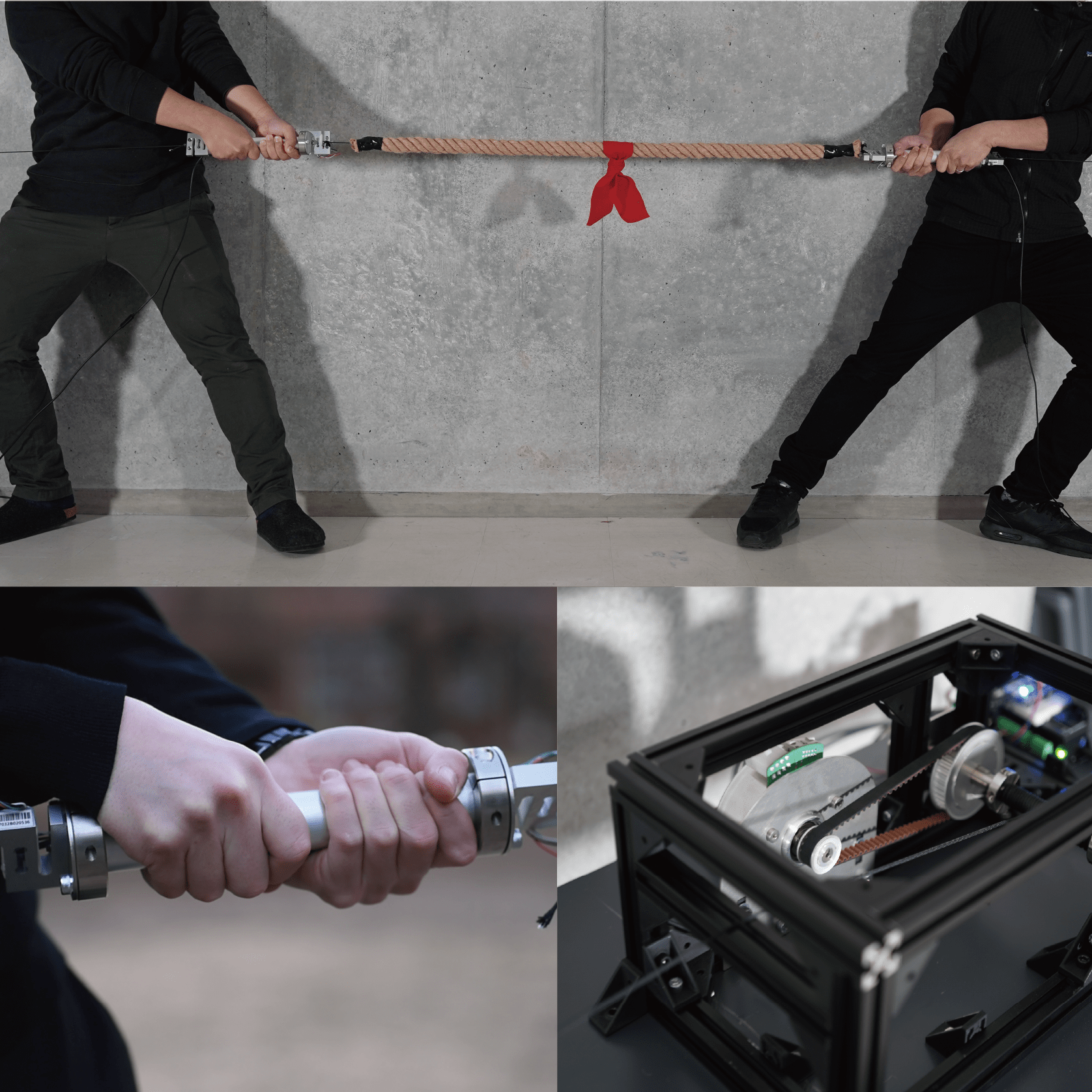

The Tight Game

Physical assistance can compensate for individual differences in ability between players to create well-balanced inter-personal physical games. However, ‘explicit’ intervention can ruin the players’ sense of agency and cause a loss of engagement for both the players and the audience. We propose an implicit physical intervention system, “The Tight Game,” for tug of war, a one-dimensional physical game. Our system includes four force sensors connected to the rope and two hidden high-torque motors, which provide real-time physical assistance. We designed the implicit physical assistance by leveraging how humans perceive external forces during physical actions. In The Tight Game, a pair of players engage in a tug of war and believe that they are participating in a well-balanced, tight game. In reality, however, an external system or person mediates the game, performing physical interventions without the players noticing.

publication:

- Azumi Maekawa, Shunichi Kasahara, Hiroto Saito, Daisuke Uriu, Ganesh Gowrishankar, and Masahiko Inami. "The Tight Game: Implicit Force Intervention in Inter-personal Physical Interactions on Playing Tug of War." ACM SIGGRAPH 2019 Emerging Technologies, ACM, 2019.

PickHits

Hitting a target feels great. We propose a system that generates this experience computationally. The system consists of external tracking cameras and a handheld device that holds and releases a thrown object. As a proof of concept, we built the system around two key elements: a low-latency release device and constant model-based prediction. During the user’s throwing motion, the ballistic trajectory of the thrown object is predicted in real time, and the object is released when the predicted trajectory coincides with the desired one. We found that the system can generate a computational hitting experience within a limited spatial range.
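The release logic described above can be sketched in a few lines. This is a minimal illustration rather than the system's actual code: it assumes a drag-free 2D projectile, a tracker that supplies the object's position and velocity at each frame, and hypothetical names (`predicted_height_at`, `should_release`) and a hypothetical tolerance value.

```python
G = 9.81  # gravitational acceleration in m/s^2

def predicted_height_at(pos, vel, target_x):
    """Height of a drag-free projectile when it reaches x = target_x.

    pos and vel are (x, y) tuples in metres and metres per second.
    Returns None if the object is not moving toward target_x.
    """
    x, y = pos
    vx, vy = vel
    dx = target_x - x
    if vx == 0 or dx / vx <= 0:
        return None
    t = dx / vx  # time of flight to the target's horizontal position
    return y + vy * t - 0.5 * G * t * t

def should_release(pos, vel, target, tolerance=0.05):
    """True when releasing now would pass within `tolerance` metres of the target."""
    height = predicted_height_at(pos, vel, target[0])
    return height is not None and abs(height - target[1]) <= tolerance
```

Called once per tracking frame during the throw, `should_release((0.0, 1.0), (5.0, 2.0), (2.0, 1.0))` returns `True`, because the predicted height at the target is about 1.015 m. A real device would also have to compensate for its own release latency.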

publication:

- Azumi Maekawa, Seito Matsubara, Atsushi Hiyama, and Masahiko Inami. "PickHits: Hitting Experience Generation with Throwing Motion via a Handheld Mechanical Device." ACM SIGGRAPH 2019 Emerging Technologies. ACM, 2019.

credit:

Azumi Maekawa, Seito Matsubara

Stand

The hardware design of a robot usually reflects its purpose: legs for walking, arms for reaching, hands for grasping, and so on. The functions of mechanical systems are explicitly defined by designers, and simple shapes consisting of straight lines or circular arcs are reasonable for such clearly defined functions. Our real environment, on the other hand, is full of diverse shapes. These complex shapes have been formed by various factors such as biological growth, aging, and weathering. By using diverse objects to create robots, we might see behaviors and motions we have never seen before. We aim to find unpredictable motions that we could not discover with simple shapes.

In this work, we focus on stand-up behavior as a primitive function of the robot. As materials for the bricolage robots, we select tree branches as objects with diverse shapes. The robots' poses are generated with the aim of maximizing the height of their bodies. Robots with diverse body shapes change their poses through repeated trial and error in real time. Through this learning process, the work portrays new functions and meanings given to commonplace objects.
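The idea of reaching a taller pose through repeated trial and error can be illustrated with a simple random search. This is only a sketch under stated assumptions and not the method used in the work: `body_height` is a hypothetical stand-in for a real height measurement (here, the highest point of a planar chain of equal links), and `search_standing_pose` is an invented name.

```python
import math
import random

def body_height(angles, link_length=0.2):
    """Hypothetical height measure: highest point of a planar chain of links.

    Each angle (radians) is relative to the previous link; the chain
    starts on the ground at height 0.
    """
    height, heading, top = 0.0, 0.0, 0.0
    for angle in angles:
        heading += angle
        height += link_length * math.sin(heading)
        top = max(top, height)
    return top

def search_standing_pose(initial, trials=500, step=0.2, seed=0):
    """Keep a random perturbation of the pose only if it raises the body."""
    rng = random.Random(seed)
    best = list(initial)
    best_height = body_height(best)
    for _ in range(trials):
        candidate = [a + rng.uniform(-step, step) for a in best]
        candidate_height = body_height(candidate)
        if candidate_height > best_height:
            best, best_height = candidate, candidate_height
    return best, best_height
```

On a physical robot, each trial would be an actual movement followed by a sensor reading, so the number of trials and the step size would have to be chosen with wear and time in mind.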

credit:

Azumi Maekawa

Naviarm

We present Naviarm, a wearable haptic assistance robotic system for augmented motor learning. The system comprises two robotic arms that are mounted on a user’s body and are used to transfer one person’s motion to another offline. Naviarm pre-records an expert's arm motion trajectories via the mounted robotic arms and then plays back the recorded trajectories to share the expert’s body motion with a beginner. Naviarm is an ungrounded system, which gives the user the mobility to perform a variety of motions. We focus on the temporal aspect of motor skill and use a mime performance as a case study learning task. We verified the system's effectiveness for motor learning through experiments. The results suggest that the proposed system benefits the learning of sequential skills.

publication:

- Azumi Maekawa, Shota Takahashi, MHD Yamen Saraiji, Sohei Wakisaka, Hiroyasu Iwata, and Masahiko Inami. "Naviarm: Augmenting the Learning of Motor Skills using a Backpack-type Robotic Arm System." In Proceedings of the 10th Augmented Human International Conference 2019, p. 38. ACM, 2019.

- Azumi Maekawa, Shota Takahashi, MHD Yamen Saraiji, Sohei Wakisaka, Hiroyasu Iwata, and Masahiko Inami. "Demonstrating Naviarm: Augmenting the Learning of Motor Skills using a Backpack-type Robotic Arm System." In Proceedings of the 10th Augmented Human International Conference 2019, p. 48. ACM, 2019.

credit:

Azumi Maekawa, Shota Takahashi, MHD Yamen Saraiji, Sohei Wakisaka, Hiroyasu Iwata, and Masahiko Inami

Photo by Yasushi Kato

Walk

This project creates bricolage robots out of tree branches found at hand. Through the process in which natural objects learn how to walk by themselves, the artwork portrays the perspectives of objects. Unlike the top-down process in which the functions of mechanical systems are explicitly defined by designers, this project emphasizes the emergence of functions, a bottom-up process in which found objects seek a function as a whole.

exhibition:

- Parametric Move, 2018

- iii Exhibition 19 “WYSIWIG?”, 2017

media:

- Bijutsu Techo (美術手帳), Aug, 2018

credit:

Azumi Maekawa, Jun Hatori, Shunta Saito, Hironori Yoshida, Ayaka Kume

Photo by Yasushi Kato

Aerial-Biped

Since ancient times, there has been a desire to create robots capable of life-like motions and behaviors. As a life-like motion, we focus on bipedal walking, one of the key features of a legged robot. The movement of a legged robot is highly restricted by gravity. Once its body shape is defined, the possible walking gaits are almost determined accordingly. It is therefore difficult for a legged robot to combine arbitrary body design with dynamic, light-stepping walking motion like that of living things. Aerial-Biped is a prototype for exploring a new experience with a physical biped robot. In this work, we use a quadrotor to separate body shape design from motion design. Releasing the biped robot from gravity relaxes the limitations on its physical motion. The motion of Aerial-Biped is generated in real time according to the movement of the quadrotor by a motion generator trained with deep reinforcement learning. Various gaits emerge in response to the quadrotor's continuously changing movements.

publication:

- Azumi Maekawa, Ryuma Niiyama, and Shunji Yamanaka. "Pseudo-Locomotion Design with a Quadrotor-Assisted Biped Robot." In 2018 IEEE International Conference on Robotics and Biomimetics (ROBIO), pp. 2462-2466. IEEE, 2018.

- Azumi Maekawa, Ryuma Niiyama, and Shunji Yamanaka. "Aerial-biped: a new physical expression by the biped robot using a quadrotor." ACM SIGGRAPH 2018 Emerging Technologies. ACM, 2018.

exhibition:

- Parametric Move, 2018

media:

- IEEE Spectrum, 13 Aug, 2018

- Reuters, 2 Oct, 2018

- ASCII, 17 Jul, 2018

- designboom, 14 Aug, 2018

- TechCrunch, 14 Aug, 2018

credit:

Azumi Maekawa, Shunji Yamanaka
